AutoGPT Agent setup¶

🐋 Set up & Run with Docker | 👷🏼 For Developers

📋 Requirements¶

Linux / macOS¶

- Python 3.10 or later

- Poetry (instructions)

Windows (WSL)¶

- WSL 2

- See also the requirements for Linux

- Docker Desktop

Windows¶

Attention

We recommend setting up AutoGPT with WSL. Some things don't work exactly the same on Windows and we currently can't provide specialized instructions for all those cases.

- Python 3.10 or later (instructions)

- Poetry (instructions)

- Docker Desktop

Setting up AutoGPT¶

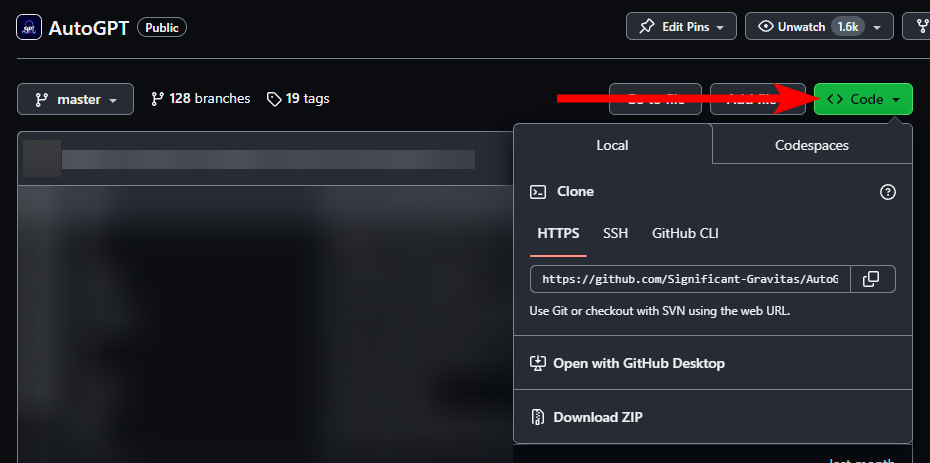

Getting AutoGPT¶

Since we don't ship AutoGPT as a desktop application, you'll need to download the project from GitHub and give it a place on your computer.

- To get the latest bleeding edge version, use

master. - If you're looking for more stability, check out the latest AutoGPT release.

Note

These instructions don't apply if you're looking to run AutoGPT as a docker image. Instead, check out the Docker setup guide.

Completing the Setup¶

Once you have cloned or downloaded the project, you can find the AutoGPT Agent in the

autogpt/ folder.

Inside this folder you can configure the AutoGPT application with an .env file and (optionally) a JSON configuration file:

.envfor environment variables, which are mostly used for sensitive data like API keys- a JSON configuration file to customize certain features of AutoGPT's Components

See the Configuration reference for a list of available environment variables.

- Find the file named

.env.template. This file may be hidden by default in some operating systems due to the dot prefix. To reveal hidden files, follow the instructions for your specific operating system: Windows and macOS. - Create a copy of

.env.templateand call it.env; if you're already in a command prompt/terminal window:cp .env.template .env - Open the

.envfile in a text editor. - Set API keys for the LLM providers that you want to use: see below.

-

Enter any other API keys or tokens for services you would like to use.

Note

To activate and adjust a setting, remove the

#prefix. -

Save and close the

.envfile. - Optional: run

poetry installto install all required dependencies. The application also checks for and installs any required dependencies when it starts. - Optional: configure the JSON file (e.g.

config.json) with your desired settings. The application will use default settings if you don't provide a JSON configuration file. Learn how to set up the JSON configuration file

You should now be able to explore the CLI (./autogpt.sh --help) and run the application.

See the user guide for further instructions.

Setting up LLM providers¶

You can use AutoGPT with any of the following LLM providers. Each of them comes with its own setup instructions.

AutoGPT was originally built on top of OpenAI's GPT-4, but now you can get similar and interesting results using other models/providers too. If you don't know which to choose, you can safely go with OpenAI*.

* subject to change

OpenAI¶

Attention

To use AutoGPT with GPT-4 (recommended), you need to set up a paid OpenAI account with some money in it. Please refer to OpenAI for further instructions (link). Free accounts are limited to GPT-3.5 with only 3 requests per minute.

- Make sure you have a paid account with some credits set up: Settings > Organization > Billing

- Get your OpenAI API key from: API keys

- Open

.env - Find the line that says

OPENAI_API_KEY= -

Insert your OpenAI API Key directly after = without quotes or spaces:

OPENAI_API_KEY=sk-proj-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxUsing a GPT Azure-instance

If you want to use GPT on an Azure instance, set

USE_AZUREtoTrueand make an Azure configuration file.Rename

azure.yaml.templatetoazure.yamland provide the relevantazure_api_base,azure_api_versionand deployment IDs for the models that you want to use.E.g. if you want to use

gpt-3.5-turboandgpt-4-turbo:# Please specify all of these values as double-quoted strings # Replace string in angled brackets (<>) to your own deployment Name azure_model_map: gpt-3.5-turbo: "<gpt-35-turbo-deployment-id>" gpt-4-turbo: "<gpt-4-turbo-deployment-id>" ...Details can be found in the openai/python-sdk/azure, and in the [Azure OpenAI docs] for the embedding model. If you're on Windows you may need to install an MSVC library.

Important

Keep an eye on your API costs on the Usage page.

Anthropic¶

- Make sure you have credits in your account: Settings > Plans & billing

- Get your Anthropic API key from Settings > API keys

- Open

.env - Find the line that says

ANTHROPIC_API_KEY= - Insert your Anthropic API Key directly after = without quotes or spaces:

ANTHROPIC_API_KEY=sk-ant-api03-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx - Set

SMART_LLMand/orFAST_LLMto the Claude 3 model you want to use. See Anthropic's models overview for info on the available models. Example:SMART_LLM=claude-3-opus-20240229

Important

Keep an eye on your API costs on the Usage page.

Groq¶

Note

Although Groq is supported, its built-in function calling API isn't mature. Any features using this API may experience degraded performance. Let us know your experience!

- Get your Groq API key from Settings > API keys

- Open

.env - Find the line that says

GROQ_API_KEY= - Insert your Anthropic API Key directly after = without quotes or spaces:

GROQ_API_KEY=gsk_xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx - Set

SMART_LLMand/orFAST_LLMto the Groq model you want to use. See Groq's models overview for info on the available models. Example:SMART_LLM=llama3-70b-8192

Llamafile¶

With llamafile you can run models locally, which means no need to set up billing, and guaranteed data privacy.

For more information and in-depth documentation, check out the llamafile documentation.

Warning

At the moment, llamafile only serves one model at a time. This means you can not

set SMART_LLM and FAST_LLM to two different llamafile models.

Warning

Due to the issues linked below, llamafiles don't work on WSL. To use a llamafile with AutoGPT in WSL, you will have to run the llamafile in Windows (outside WSL).

Instructions

- Get the

llamafile/serve.pyscript through one of these two ways:- Clone the AutoGPT repo somewhere in your Windows environment,

with the script located at

autogpt/scripts/llamafile/serve.py - Download just the serve.py script somewhere in your Windows environment

- Clone the AutoGPT repo somewhere in your Windows environment,

with the script located at

- Make sure you have

clickinstalled:pip install click - Run

ip route | grep default | awk '{print $3}'inside WSL to get the address of the WSL host machine - Run

python3 serve.py --host {WSL_HOST_ADDR}, where{WSL_HOST_ADDR}is the address you found at step 3. If port 8080 is taken, also specify a different port using--port {PORT}. - In WSL, set

LLAMAFILE_API_BASE=http://{WSL_HOST_ADDR}:8080/v1in your.env. - Follow the rest of the regular instructions below.

Note

These instructions will download and use mistral-7b-instruct-v0.2.Q5_K_M.llamafile.

mistral-7b-instruct-v0.2 is currently the only tested and supported model.

If you want to try other models, you'll have to add them to LlamafileModelName in

llamafile.py.

For optimal results, you may also have to add some logic to adapt the message format,

like LlamafileProvider._adapt_chat_messages_for_mistral_instruct(..) does.

- Run the llamafile serve script:

The first time this is run, it will download a file containing the model + runtime, which may take a while and a few gigabytes of disk space.

python3 ./scripts/llamafile/serve.py

To force GPU acceleration, add --use-gpu to the command.

-

In

.env, setSMART_LLM/FAST_LLMor both tomistral-7b-instruct-v0.2 -

If the server is running on different address than

http://localhost:8080/v1, setLLAMAFILE_API_BASEin.envto the right base URL